The 6 Levels of AI Fluency: Where Do You Stand?

Why a Framework Matters

Somebody asks you “how do you use AI?” and you freeze. You can at least say you use AI, but explain how you use it? In detail? You know that saying “sporadically, I guess” is not the answer you should give anymore.

Also, notice how the question has shifted from “if…” to “how…” over the last couple of years? Usage is assumed. People expect to hear more now: trade tips, anecdotes, specifics.

The problem is that most people don’t know how to answer that question, not even the ones asking. There really isn’t a structured way to think about AI proficiency. You’ve “used ChatGPT a few times” but you can’t tell whether that makes you ahead of the curve, behind it, or on par.

Because we have no language for it. No scale. No reference points.

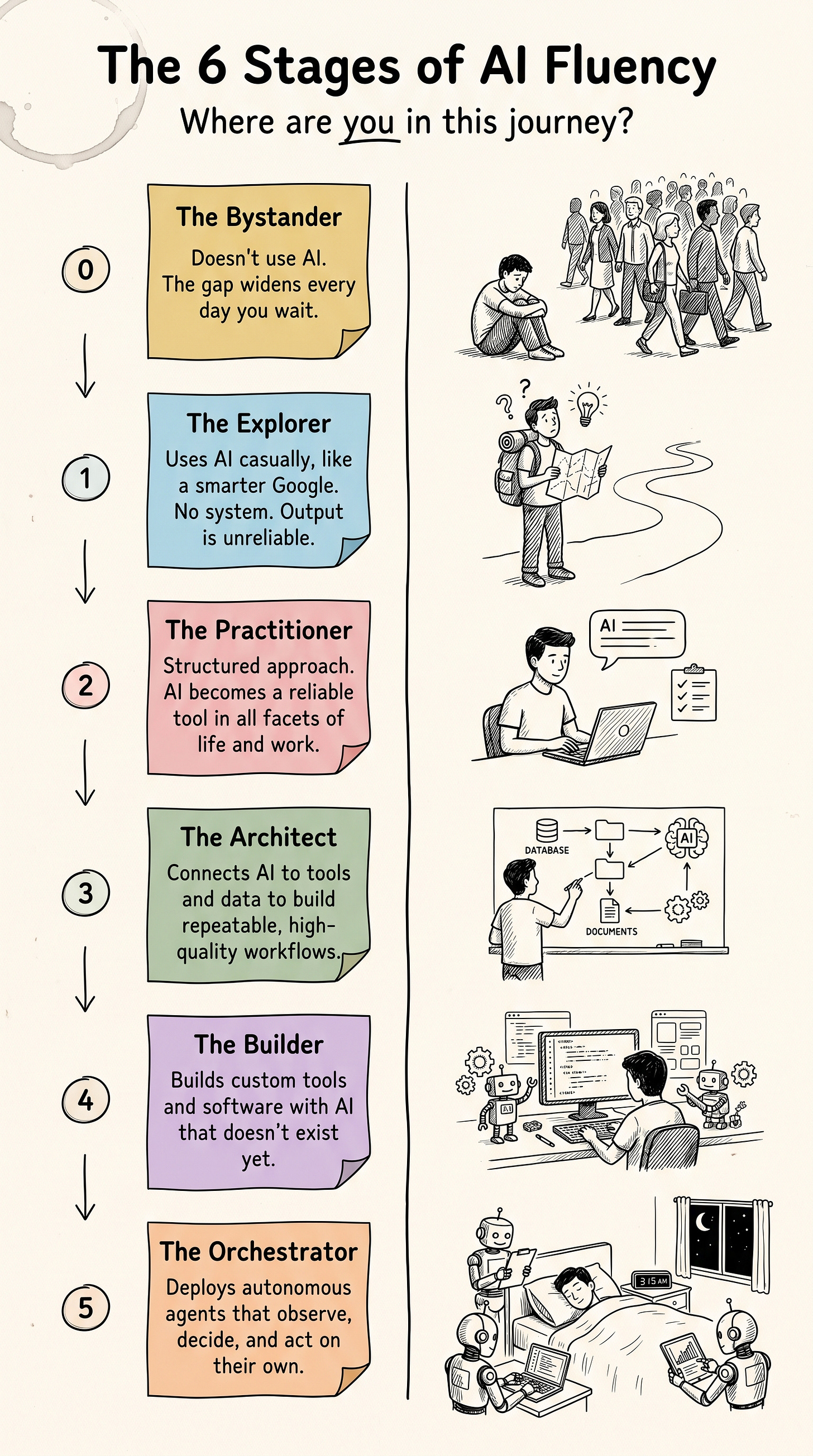

So I stole the best skill model I could find and repurposed it for AI: Dreyfus’s 5-stage model of skill acquisition, which has shaped how medicine, aviation, and the military train experts for 40+ years. But I added a Level 0, the Bystander, because Dreyfus assumed you’ve at least started. With AI, half the problem is starting.

That's what this framework gives you: goal posts for structured progression.

So why frame things as structured progression at all? Because it solves the two biggest failure points in learning:

The overwhelm problem. You can’t eat the entire elephant in one sitting. Structured stages act as a complexity filter. You only deal with what you’re ready for. Think of it like progressive overload in training: you don’t walk into a gym and load 300 pounds on the bar day one.

The plateau problem. Without escalating goals, people settle into comfortable competence and stop growing. And usually, comfortable competence means the beginner stage repeated over and over. Just good enough to tread water. Structured progression builds in goal escalation automatically, with each stage demanding more than the last.

But don’t take my word for it. Here is the evidence.

Goal-setting research (Locke and Latham, validated across 35+ years and hundreds of studies) tells us that specific, challenging goals lead to higher performance 90% of the time compared to vague “do your best” or “figure out what works best for you” goals. A proficiency framework gives you the specific targets and focus that “just get better at AI” never will.

And the stages take inspiration from Stuart and Hubert Dreyfus’s five-stage model of skill acquisition, which goes from novice to expert. This model has been applied across medicine, aviation, and professional development for over four decades.

Staged proficiency models work because they make invisible progress visible. You shouldn’t underestimate being able to articulate an answer to: “where are you right now, and what does the next step actually look like?”

And the urgency is real. DataCamp’s 2026 State of Data & AI Literacy report found that 59% of enterprise leaders report an AI skills gap in their organization. And that’s even though 82% of organizations already provide some form of AI training.

The training exists. The progression map doesn’t. So here’s the map.

The AI Fluency Framework

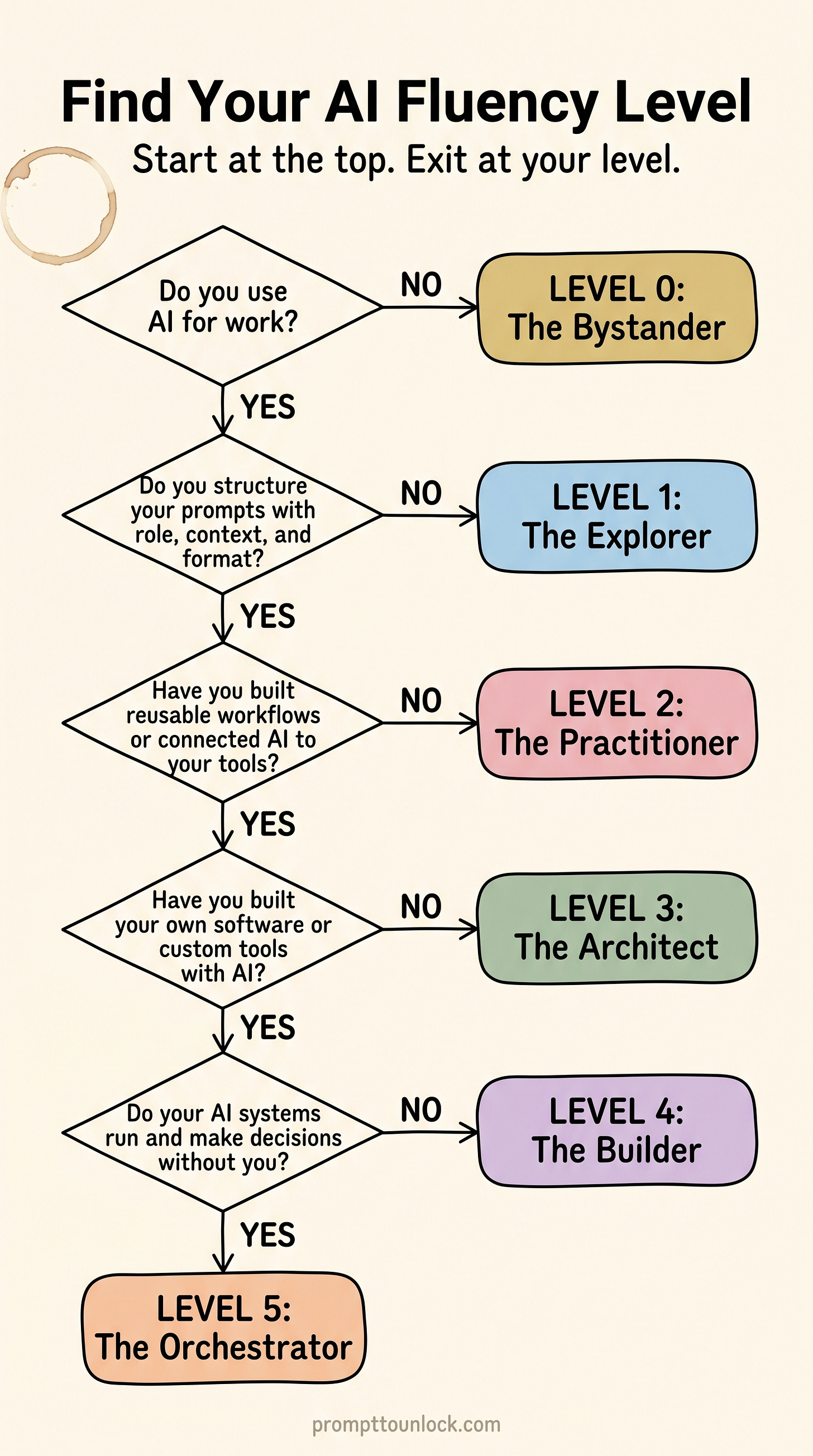

Curious where you land? Take the 5-question quiz now or keep reading.

That’s the staircase. Now let’s zoom in on each step.

Level 0: The Bystander

Not using AI, or tried it once and bounced.

You’re skeptical, overwhelmed, or just not seeing why it’s a big deal.

Level 0 is meaningfully different from every other level in that it’s a motivation and access barrier. Every other level is a skill barrier.

What this looks like:

The operations director who’s been told to “look into AI” by leadership but it hasn’t been a priority yet, so they’re scrambling to catch up.

The leader who refuses to touch it, reasoning that if they don’t use it, it can’t replace them.

The professional who heard about it at a dinner party, tried asking it to do something vague, got a generic answer, and concluded “it’s just a fancy autocomplete”.

The person who’s genuinely concerned about data privacy and chose not to engage until they understand the risks, which is actually reasonable. They just need the right information to make an informed decision, not a blanket avoidance strategy.

Your next steps toward Level 1:

Pick one small task you already do regularly, like summarizing a meeting, drafting an email, or explaining a concept to a colleague, and try it with ChatGPT, Claude, or Gemini.

Schedule yourself 15 minutes with a free tool. Have a conversation. Observe what it does and doesn’t do well.

Read one credible source on what AI actually is and how it works.

Recommendation: Large Language Models explained briefly

The danger:

Lack of adoption isn’t free, it’s just a delayed bill.

I’m sure you’ve heard the statement: “Workers won’t necessarily get replaced by AI, but by those who use AI.” In that statement, you could swap AI with any major new technology of the last 100 years and it’d still be true.

Economists Autor, Levy, and Murnane studied 40 years of US labor data and found the same pattern at the worker level as computers spread through the workplace: workers who adopted them pulled ahead, and workers who didn’t were left behind.

And the gap between them compounds for decades. A follow-up study by Acemoglu and Autor showed that when computers complemented complex, non-routine tasks, the market’s valuation of educated workers skyrocketed. Following three decades of increase, the college wage premium hit a high-water mark in 2008, meaning the average college graduate earned 97% more than the average high school graduate.

Now AI is the new computer.

Same pattern, but a much faster timeline. PwC’s 2025 AI Jobs Barometer found that workers with AI skills now earn a 56% wage premium (more than double last year’s 25%) over workers in the same job without them. And in industries most exposed to AI, wages are rising twice as fast as in industries least exposed.

Level 1: The Explorer

Uses AI casually, like a smarter Google.

You get value, but there’s no system, no consistency, and no understanding of why some prompts produce gold and others produce garbage.

What this looks like:

The marketing manager who asks ChatGPT “give me 5 subject lines for my email campaign” and uses whatever comes back. Sometimes it’s great, sometimes it’s unusable, and she can’t pinpoint why.

The account exec who summarizes articles before client calls but types one-sentence prompts and accepts the first response without ever pushing back.

The project manager who asks AI and Google the same question, compares answers, but never iterates on or refines the AI’s output.

Your next steps toward Level 2:

Learn the basics of structured prompting: give AI a role, context, a specific task, and a format. This alone will transform your results from coin-flip to predictable.

Start building a personal prompt library in your preferred structured format. Save your best prompts. Reuse and refine them.

Here is a 2024 paper on how prompt formatting affects LLM performance.

My personal preference is Markdown, a simple text formatting system that is easy to learn and easy to use.

Formatting will matter less as models get better, but for now it’s an advantage.

Try iterating. Instead of accepting the first response, give feedback. Iterating can drastically improve quality.

Caveat: not all iterating helps. A 2025 study found that LLM performance dropped by an average of 39% in multi-turn conversations where the request was revealed piece by piece instead of being stated up front. Iteration works when it’s directed (“make this more concise, add a healthcare example”), not when it’s exploratory.

Start noticing patterns. When you get an output just right, ask yourself why. Was it more context? Better stated goal? That feedback loop is the bridge from Explorer to Practitioner.
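If you want to see the role, context, task, and format structure from the first step above in concrete form, here is a minimal sketch in Python. The function name `build_prompt`, the Markdown headings, and the sample text are all my own illustrative choices, not a standard. You can get the same result by typing the four sections by hand into any chat window.

```python
def build_prompt(role: str, context: str, task: str, output_format: str) -> str:
    """Assemble a four-part prompt as Markdown sections."""
    sections = [
        ("Role", role),
        ("Context", context),
        ("Task", task),
        ("Format", output_format),
    ]
    # Each part gets its own heading so you can find and tweak it later.
    return "\n\n".join(f"## {title}\n{body.strip()}" for title, body in sections)


# Hypothetical example values; swap in your own.
prompt = build_prompt(
    role="You are an email marketer for a B2B software company.",
    context="We are announcing a webinar on AI fluency for operations leaders.",
    task="Write 5 subject lines for the announcement email.",
    output_format="A numbered list, each line under 50 characters.",
)
print(prompt)
```

Saving a function (or just a text template) like this is one simple way to start the prompt library mentioned above: when an output lands, you can see which of the four sections did the work.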

The danger:

The ‘good enough’ trap.

Most AI users are at this level in the framework. According to OpenAI’s research, 45% of all work-related messages involve getting information, interpreting information, or documenting information. So nearly half of all work usage is information processing: using AI like a smarter Google.

So why is this a danger? Aren’t those great use cases? Yes, they are.

But these use cases are the safest and most consistent uses of AI. You’re getting some value. Arguably, enough to feel like you’re an adopter, but it’s just enough to tread water.

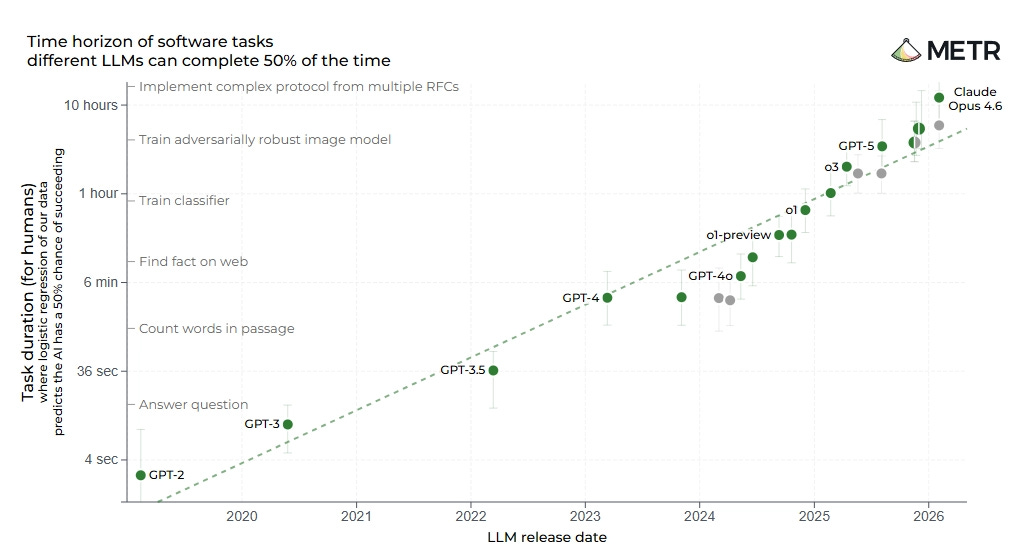

Think about how fast AI has been evolving. Today’s ‘good enough’ ceiling will be the floor in a year or two.

In fact, METR (AI evaluation lab) found that the length of tasks models can complete autonomously has been doubling every seven months for six years straight. And in the last year, that pace accelerated to every four months. That’s an exponential gain.
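To put numbers on that pace, here is a back-of-the-envelope sketch. It assumes the doubling trend simply continues at a constant rate, which is my simplification and not something the METR data guarantees. The function name `growth_factor` is also mine.

```python
def growth_factor(months: float, doubling_months: float) -> float:
    """How many times longer tasks get after `months` at a given doubling period."""
    return 2 ** (months / doubling_months)


for doubling_months in (7, 4):
    one_year = growth_factor(12, doubling_months)
    two_years = growth_factor(24, doubling_months)
    print(
        f"doubling every {doubling_months} months: "
        f"x{one_year:.1f} after 1 year, x{two_years:.1f} after 2 years"
    )
```

Under that assumption, a seven-month doubling period means roughly 3.3x longer tasks after one year and about 10.8x after two. A four-month doubling period means 8x after one year and 64x after two.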

I know what you’re thinking: “If AI is improving that quickly, then can’t it eventually meet me where I’m at instead?” And that’s flawed thinking.

Because when the model improves, everyone's baseline results improve with it. What passes as impressive today becomes the default tomorrow. You’re intentionally limiting yourself.

Think about it this way: better models don't close the gap between skilled and unskilled users. They widen it.

The Explorer gets a better baseline result with their one-sentence prompt. The Practitioner gets a result that further exceeds the baseline with a structured, iterative prompt.

The model is the multiplier. Your input is what it multiplies. The premium on skill doesn't shrink as AI improves, it compounds.

Food for thought.

Level 2: The Practitioner

Has a structured prompting approach. Knows enough to use AI intentionally for work.

This is where AI stops being a novelty and becomes a reliable tool. You can delegate more creative and nuanced work with consistent, predictable results. You know when and how to iterate so that you can rise above the dreaded AI slop.

This is where my upcoming book, Prompt to Unlock, guides people.

What this looks like:

The HR partner who writes structured prompts with role, context, and constraints to draft policy documents and then has a process to iterate 3–4 times until the output matches her organization’s tone and compliance requirements.

The financial analyst who uses multi-turn conversations to walk AI through a dataset, asking clarifying questions, fact-checking conclusions, and producing a summary for his leadership deck. What may have taken days now takes a couple of hours.

The content creator who has saved prompt templates for recurring tasks like “write a LinkedIn post in [voice] about [topic] with [specific structure]” and gets a mostly usable output on the first pass.

Your next steps toward Level 3:

Experiment with multi-step workflows and managing context: research → outline → draft → edit as four separate, sequential prompts instead of one big ask. The quality difference is significant.

Explore custom instructions or project-level context (available in ChatGPT, Claude, and Gemini) to give AI persistent knowledge about your role, preferences, and common tasks.

Explore tool-connected AI that accesses more of your actual data: calendar integrations, file search, database connections, Notion/Obsidian, etc.

For regulated industries like healthcare and finance, this step involves your compliance team and that’s worth starting early.

Start thinking about AI as infrastructure, not just a chat window. What recurring workflows could you automate end-to-end?
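The research → outline → draft → edit workflow from the first step above can be sketched as a simple chain, where each stage’s output becomes the next stage’s input. This is a minimal illustration under assumptions: the prompt wording is mine, and `fake_model` is a placeholder so the sketch runs offline. In practice you would replace it with a call to whichever model you use, or just paste each step into a chat by hand.

```python
from typing import Callable

# Illustrative prompt templates; the wording is an assumption, not a recipe.
STAGES = [
    ("research", "List the key facts and open questions about: {input}"),
    ("outline", "Turn these research notes into an outline:\n{input}"),
    ("draft", "Write a first draft that follows this outline:\n{input}"),
    ("edit", "Edit this draft for clarity and concision:\n{input}"),
]


def run_chain(topic: str, ask_model: Callable[[str], str]) -> dict[str, str]:
    """Run the stages in order, feeding each output into the next prompt."""
    outputs = {}
    current = topic
    for name, template in STAGES:
        current = ask_model(template.format(input=current))
        outputs[name] = current  # keep every stage so you can review or redo one
    return outputs


def fake_model(prompt: str) -> str:
    """Placeholder so the sketch runs offline; replace with a real model call."""
    return f"[response to: {prompt.splitlines()[0]}]"


results = run_chain("AI fluency for operations teams", fake_model)
print(results["edit"])
```

Keeping the output of every stage, not just the last one, is the design choice that matters here: if the draft goes wrong, you can fix the outline and rerun from there instead of starting over.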

The danger:

Plateau by comfort. You’ve crossed the hardest gap in this framework, which is having the ability to repeatably get high-quality results from AI. And honestly, this puts you ahead of at least 50% of people.

And that’s the trap. Unlike Level 0 or 1, the ground won’t suddenly shift out from under you. As a Practitioner, you’re not falling behind.

But there’s an entire other level to this mountain you can’t see from this altitude: you’re still doing everything manually.

Every prompt is hand-typed. Every workflow is handled ad hoc. You’re saving hours, but you’re spending hours to save them.

The Architect automates what the Practitioner repeats. The difference: the Practitioner saves hours a week, while the higher levels save weeks a year.

Personally, I was stuck here for over a year. I thought being good at prompting was enough.

I was getting decent outputs, and that was exactly the problem. I didn’t know what I was missing until I saw what an Architect could do.

Here is a list of things I was able to do by moving beyond Level 2. Each is a future guide on this blog.

Custom tools I ship in an afternoon: the survey below is an example.

A morning briefing delivered as audio while I make coffee: industry news filtered, calendar previewed, priorities surfaced, all without me opening a single app.

Pre-meeting briefings in my inbox 30 minutes before every call with the attendees, company, recent news, and my own notes.

Content atomization from a single source document, in my voice, with my guardrails.

Quarterly financials reported without me opening a spreadsheet. Transactions pulled, taxes estimated, anomalies flagged, digest in my inbox. I haven’t manually calculated revenue in over a year.

Level 3: The Architect

Builds repeatable AI workflows, not one-off prompts. Engineers context deliberately with custom scaffolding. Connects AI to tools and data sources.

Here’s the shift: at Level 2, you’re great at individual, ad hoc prompting. You can use AI effectively out of the box and get to average outcomes pretty fast.

But you have to spend extra time to take it beyond average. For some tasks, this means it isn’t even worth it to involve AI.

At Level 3, you stop thinking in one-off conversations and start thinking in systems. You learn to augment an AI’s capabilities with tools, data, and custom workflows. This shortens the time to an exceptional and custom outcome.

This is when AI starts to become infrastructure for how you work.

The defining question: are you still starting from scratch every time you open a chat or investing large amounts of time for a quality outcome? If yes, you’re still a Practitioner.

If you’ve built persistent context, connected tools, and repeatable workflows that carry forward across sessions, then you’re an Architect. And honestly, this level likely puts you ahead of 90% of people right now.

What this looks like:

The product manager who set up a Claude Project with custom instructions loaded with her company’s product specs, brand voice guide, and competitive landscape so every conversation starts with deep context instead of a blank slate.

The job seeker who built an AI-powered job search OS: one workflow researches target companies, another tailors their resume for each application, a third drafts personalized outreach. All feeding into an application tracking dashboard.

The small business owner who connected their CRM, email, and calendar through n8n so that when a new lead comes in, AI is automatically prompted to research the company, draft a personalized follow-up, and schedule it in their outreach sequence. This turns a 20-minute manual process into a 2-minute review-and-send.

Concrete tools at this level: Claude Projects, Claude Cowork, ChatGPT Custom GPTs, Notion/Obsidian integrations, n8n (open-source, self-hostable), Zapier/Make for workflow automation, Google Apps Script for connecting spreadsheets to AI APIs.

Your next steps toward Level 4:

Ship something small first. One problem, one tool, one user (you). The best Builders iterate on real usage, not hypothetical features.

You must master prompt chaining: sequences where each prompt’s output feeds the next prompt’s input. This is the cornerstone of the bridge from Architect to Builder.

Start learning a programming language like Python or JavaScript.

Experiment with vibe coding: Claude Code, Cursor, Replit Agent, or Lovable. These are purpose-built for people who can describe what they want but don’t write code professionally.

Identify one problem you deal with every week that still needs to be manual. Then build one script, one automation, or one simple tool to ship for yourself before you ship it for anyone else.

Learn the basics of version control and deployment. AI can teach you this and it’s the difference between “I built a thing on my laptop” and “I built a thing other people can use.”

The danger:

The biggest risk you’ll have is trying to automate a process you don’t fully understand. This manifests in a couple of ways.

You’re trying to automate a process that you haven’t manually done yourself, at least a few times. Or you build elaborate systems for problems a single well-written prompt would solve (hammer, meet all of these hypothetical nails).

The signal you’ve crossed the line: you’re spending more time building/maintaining your AI ‘infrastructure’ than doing the work it was supposed to replace.

As your capabilities increase, your judgment on problem-solution fit must improve with them.

Level 4: The Builder

Creates software and custom tools with AI assistance. Doesn’t just use what exists, builds what doesn’t.

At Level 4, you gain the ability to create the tools themselves. You speak enough of the language of technology to direct AI to build functional applications. And enough is less than you think.

The best part: you don’t need to be a junior developer to start. If you have a clear problem statement and the ability to describe what you want, then most of the time, you won’t need to touch code at all.

The coding basics you picked up help you understand what AI is building, but at this level, AI often writes the code for you. We’ve come that far.

What this looks like:

The solopreneur with a side business who used Claude to build a Python script that pulls revenue and expense data from Google Sheets, estimates quarterly tax liability based on their state and filing status, and emails them a formatted summary every quarter with what they owe. Total build time: one afternoon.

The marketing director who built a custom Slack bot that monitors brand mentions across social channels, runs sentiment analysis, and posts a daily digest to her team. No engineering team required.

Alex Finn, a creator with no formal engineering background, used Claude Code to solo-build Creator Buddy, an AI-powered app that hit $300,000 ARR. He described what he wanted, iterated with AI, and shipped a production product in five months without writing a single line of code himself.

All of the evidence for this success is documented publicly on his social media and blog.
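To give a feel for how small a Builder-level script can be, here is a rough sketch of the core of the quarterly tax example above. Everything specific in it is an assumption for illustration: the sample rows stand in for data pulled from a spreadsheet, and the flat 25% rate is a placeholder, not a real tax rule. The Google Sheets and email steps are left out on purpose.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    description: str
    amount: float  # positive = revenue, negative = expense


# HYPOTHETICAL flat rate for illustration only; real liability depends on
# state, filing status, and deductions.
ASSUMED_TAX_RATE = 0.25


def quarterly_summary(entries: list[Entry], tax_rate: float = ASSUMED_TAX_RATE) -> str:
    """Total revenue and expenses, then estimate tax on any profit."""
    revenue = sum(e.amount for e in entries if e.amount > 0)
    expenses = -sum(e.amount for e in entries if e.amount < 0)
    profit = revenue - expenses
    estimated_tax = max(profit, 0.0) * tax_rate  # no tax estimate on a loss
    return (
        f"Revenue: ${revenue:,.2f}\n"
        f"Expenses: ${expenses:,.2f}\n"
        f"Profit: ${profit:,.2f}\n"
        f"Estimated tax (assumed {tax_rate:.0%} rate): ${estimated_tax:,.2f}"
    )


# Made-up sample rows standing in for spreadsheet data.
rows = [
    Entry("Client invoice", 4000.00),
    Entry("Course sales", 1500.00),
    Entry("Software subscriptions", -300.00),
    Entry("Contractor", -1200.00),
]
summary = quarterly_summary(rows)
print(summary)
```

With these sample rows the summary shows $5,500.00 in revenue, $1,500.00 in expenses, $4,000.00 in profit, and a $1,000.00 estimate at the assumed rate.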

Your next steps toward Level 5:

Understand the basics of how high-quality agents function.

This is a great starting point: Thin Harness, Fat Skills by Garry Tan.

Start thinking about persistence. The tools you build at Level 4 run when you trigger them. Level 5 is about tools that run without you. What would it look like if your best tool monitored, decided, and acted on its own?

Learn to evaluate what you’ve built. Does it actually save time? Does anyone use it? The skill at this level isn’t building, but the judgment to know what’s worth building.

Learn what it takes to secure AI-built tools and agentic workflows.

The danger:

Bad judgment and blind spots. The discipline at Level 4 isn’t building. It’s the willingness to grind enough to make the skill actually useful.

My top three rules for being a builder with AI.

Rule #1: don’t build without purpose.

The dopamine of shipping with AI is real, and it’s easy to blow through millions of tokens tinkering aimlessly (trust me). Paying to play is fine. Just remember vibe coding is a skill like anything else.

My challenge: are you learning or earning? Pick one. If you're doing neither, you're just paying to play (hello new version of microtransactions).

You could ship a new tool every weekend and never open most of them.

Rule #2: don’t ship code you don’t understand.

AI makes mistakes. You don’t need to be able to write all the code, but you should be able to understand what it’s doing. This starts as simple as understanding what every file does and why.

My challenge: can you explain what your code does in plain English? If not, you can't ship it.

Rule #3: don’t build without guardrails.

The code isn’t the only risk.

When you’re creating tools that touch real data like API keys, financial records, or customer information, you’re also creating opportunities for attackers.

Then there are the actions of the AI itself, which can be wrong. You should never blindly approve what AI wants to do. You don’t want to be this person (and make no mistake, this was a people problem, not an AI problem).

Security and safety aren’t optional if you’re a builder. They’re the price of admission.

Build assets, not liabilities.
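One simple form a guardrail from Rule #3 can take is an approval gate: low-risk actions run on their own, and anything risky waits for a human to say yes. The sketch below is an illustration under assumptions. The action names and the `cautious_human` stand-in are hypothetical, and a real tool would ask an actual person and perform an actual action.

```python
from typing import Callable

# HYPOTHETICAL action names; list whatever your own tool can actually do.
NEEDS_APPROVAL = {"send_email", "delete_file", "make_payment"}


def run_action(action: str, details: str, approve: Callable[[str], bool]) -> str:
    """Run low-risk actions directly; ask a human before anything risky."""
    if action in NEEDS_APPROVAL and not approve(f"{action}: {details}"):
        return f"BLOCKED {action}"
    # A real tool would perform the action here; the sketch only reports it.
    return f"RAN {action}"


def cautious_human(request: str) -> bool:
    """Stand-in reviewer that refuses anything involving deletion."""
    return "delete" not in request


print(run_action("summarize_notes", "weekly sync", cautious_human))
print(run_action("delete_file", "2024-taxes.xlsx", cautious_human))
print(run_action("send_email", "follow-up to lead", cautious_human))
```

The design choice is the allowlist in reverse: you name the actions that can hurt you, and everything on that list stops until someone looks at it.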

Level 5: The Orchestrator

Deploys autonomous AI systems that observe, decide, and act without you in the loop for every decision.

Here’s the critical distinction between these latter levels and how they build on each other:

At Level 3, you learn to manage context and how to improve AI outcomes. But you’re still largely in the loop, reviewing output, managing steps, and triggering workflows manually.

At Level 4, you can build your own custom tools that automate manual steps or solve problems no existing tool addresses.

At Level 5, you build systems that can mimic your judgment and taste. They observe conditions, evaluate what matters, and take action on their own within guardrails you define.

In other words, Level 5 is when AI can confidently act while you sleep.

Every level is essentially decreasing the time it takes you to get to a quality outcome and increasing how much of that time doesn’t require you at all.

What this looks like:

The solopreneur running an OpenClaw agent that scans six email accounts every hour, filters out noise, summarizes what’s important, drafts responses, and sends them to their messaging app for approval. It also sends a morning briefing with task priorities, weather, and calendar pulled from their project management system, delivered as an audio file while they make coffee.

The founder who deployed agents that monitor competitor pricing, industry news, and customer feedback across multiple channels 24/7, surfacing only the signals that require a human decision and auto-filing everything else.

The developer who built a support system where one agent triages incoming tickets, a second drafts responses using the company knowledge base, and a third escalates edge cases to humans. All three run overnight, working autonomously through a queue that would otherwise take a team of three.
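The shape of that three-agent support system can be sketched in a few lines. This is a toy illustration under assumptions: simple keyword matching stands in for what would be model calls in a real system, and the knowledge base entries, class, and function names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Ticket:
    id: int
    text: str


# HYPOTHETICAL knowledge base; in a real system each function would call a model.
KNOWLEDGE_BASE = {
    "password": "You can reset your password from the login page.",
    "invoice": "Invoices are available under Billing in your account.",
}


def triage(ticket: Ticket) -> Optional[str]:
    """Agent 1: return the matching knowledge-base topic, or None if unsure."""
    for topic in KNOWLEDGE_BASE:
        if topic in ticket.text.lower():
            return topic
    return None


def draft_reply(topic: str) -> str:
    """Agent 2: draft a response from the knowledge base."""
    return KNOWLEDGE_BASE[topic]


def handle(tickets: list[Ticket]) -> tuple[dict[int, str], list[int]]:
    """Draft when triage finds a topic; otherwise escalate (agent 3's job)."""
    drafts, escalated = {}, []
    for ticket in tickets:
        topic = triage(ticket)
        if topic is None:
            escalated.append(ticket.id)  # edge case goes to a human
        else:
            drafts[ticket.id] = draft_reply(topic)
    return drafts, escalated


tickets = [
    Ticket(1, "I forgot my password"),
    Ticket(2, "Where is my invoice for March?"),
    Ticket(3, "Your app deleted my data"),
]
drafts, escalated = handle(tickets)
print(drafts)
print(escalated)
```

Note the default: anything triage does not recognize goes to a human. That “escalate when unsure” rule is what lets a system like this run overnight without a person watching every ticket.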

The honest caveat: This is the frontier. Most people operating at Level 5 today are deeply technical, and the tooling is evolving fast.

Frameworks like OpenClaw (an open-source agent orchestrator that hit 100,000 GitHub stars in its first week) are making this more accessible, but it still requires a significant time investment for setup, security awareness, and ongoing maintenance. Level 5 is in this framework because it’s where the technology is heading, and knowing the destination changes how you think about Levels 2, 3, and 4.

Where to go from here: At this level, the “next step” isn’t climbing another rung. It’s pushing the boundary itself: contributing to open source, building products that extend what agents can do, or designing the guardrails that make autonomous systems trustworthy.

Just keep building.

The danger:

Agent drift.

Autonomous systems don’t crash, their reliability decays. They start being subtly wrong, and because no human is in the loop for every decision, it compounds before anyone notices. “Set it and forget it” becomes “set it and regret it.”

At Level 5, the work isn’t on rails, it’s unpredictable. So your main job becomes designing the guardrails and evaluation loops that catch drift before it becomes damage.

This level takes serious skill. Not just to build, but to maintain responsibly.
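Here is one minimal way an evaluation loop for catching drift could look: spot-check a sample of the agent’s decisions, keep a rolling window of pass/fail results, and raise a flag when the pass rate slips. The class name, window size, and threshold are assumptions I picked for illustration. How you decide whether a single decision “passed” is the hard part, and it is not shown here.

```python
from collections import deque


class DriftMonitor:
    """Track recent spot-check results and flag when the pass rate slips."""

    def __init__(self, window: int = 20, min_pass_rate: float = 0.9):
        self.results = deque(maxlen=window)  # oldest checks fall off automatically
        self.min_pass_rate = min_pass_rate

    def record(self, passed: bool) -> None:
        self.results.append(passed)

    def pass_rate(self) -> float:
        return sum(self.results) / len(self.results) if self.results else 1.0

    def drifting(self) -> bool:
        # Wait for a full window so one early failure does not trigger an alarm.
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.pass_rate() < self.min_pass_rate


# Made-up spot-check results: reliability decays toward the end.
monitor = DriftMonitor(window=5, min_pass_rate=0.8)
for passed in [True, True, True, True, True, False, True, False]:
    monitor.record(passed)
    if monitor.drifting():
        print(f"pass rate {monitor.pass_rate():.0%}: pause the agent and review")
```

In this run, nothing fires on the first failure. The alert only triggers on the last check, when two of the five most recent results have failed. That is the point of a rolling window: it catches a decaying trend, not a single bad call.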

The Honest Assessment

If you think about where we are collectively, the numbers are sobering.

DataCamp’s 2026 report (conducted with YouGov) found that only 17% of employees use AI frequently, despite 42% expecting their role to change significantly because of it within the next year. Meanwhile, 34% of workers feel unprepared for AI-driven changes, and 42% say their employer expects them to figure it out on their own.

Translation: most of the workforce is at Level 0 or Level 1. And most organizations are shrugging and saying “go learn AI” without giving anyone any guidance.

But here’s the flip side, and this is the part that matters. Organizations that pair a time investment with structured upskilling programs are nearly twice as likely to see significant ROI from AI.

So if you’re at Level 0? Your next step is trying one task. (Scroll back up to The Bystander, it’s right there.)

If you’re at Level 1? Your next step is learning to structure a prompt. (It’s in The Explorer section.)

Every level has a next step. And if you don’t know yours, you can take the quiz below.

Find Your Level: The 5-Question Self-Assessment

Below is a link to a survey built with AI. This is a good example of something small that a practiced Builder can do.

Short on time? Here is the 15-second version of the survey.

What Comes Next

Now you know where you stand. The question is whether you do anything about it.

Every level above has a “next steps” section written for exactly where you are. Scroll back up to your level and pick one action. Not three. One.

If you landed at Level 0 or 1 and want structured help getting to Level 2, that’s exactly what my upcoming book Prompt to Unlock is built to help you achieve. Coming later in 2026.

You can also follow along as I build this framework out with deeper dives into each level, tool breakdowns, real workflows, and practical guides. This blog is where it will all live.

And I’m curious: where did you land, and did it surprise you? Drop a comment below or find me on X.

This article is for informational purposes only and does not constitute professional advice. Please consult with qualified professionals before making career, business, or technology decisions.

Sources:

Locke, E.A. & Latham, G.P. (2002). “Building a Practically Useful Theory of Goal Setting and Task Motivation.” American Psychologist.

Dreyfus, S.E. (2004). “The Five-Stage Model of Adult Skill Acquisition.” Bulletin of Science, Technology & Society.

DataCamp/YouGov (2026). “The State of Data & AI Literacy in 2026.”

Finn, A. (2025). “How to Build Your First App with AI.” alexfinn.ai

Autor, D., Levy, F., & Murnane, R. (2003). “The Skill Content of Recent Technological Change: An Empirical Exploration.” Quarterly Journal of Economics.

Acemoglu, D., & Autor, D. (2011). “Skills, Tasks and Technologies: Implications for Employment and Earnings.” Handbook of Labor Economics.

Michaels, G., Natraj, A., & Van Reenen, J. (2014). “Has ICT Polarized Skill Demand? Evidence from Eleven Countries Over 25 Years.” Review of Economics and Statistics.

Chatterji, A., Cunningham, T., Deming, D. J., Hitzig, Z., Ong, C., Shan, C., & Wadman, K. (2025). “How People Use ChatGPT.” NBER Working Paper No. 34255. https://www.nber.org/system/files/working_papers/w34255/w34255.pdf

Kwa, T., West, B., et al. (2025). “Measuring AI Ability to Complete Long Tasks.” METR. https://metr.org/blog/2025-03-19-measuring-ai-ability-to-complete-long-tasks/

He, J., Rungta, M., Koleczek, D., Sekhon, A., Wang, F. X., & Hasan, S. (2024). “Does Prompt Formatting Have Any Impact on LLM Performance?” arXiv. https://arxiv.org/abs/2411.10541

PwC (2025). “The Fearless Future: 2025 Global AI Jobs Barometer.” https://www.pwc.com/gx/en/issues/artificial-intelligence/job-barometer/2025/report.pdf

Laban, P., et al. (2025). “LLMs Get Lost In Multi-Turn Conversation.” arXiv:2505.06120